The Manning Centre released a report this week, "Growing the Democratic Toolbox: City Council Vote Tracking".

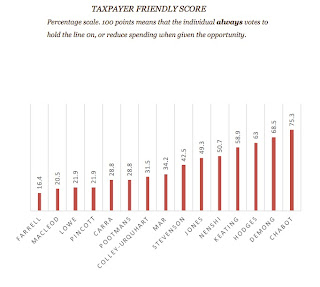

This is yet another piece of statistical nonsense. It makes the supposition that being on the "winning" side of a vote is somehow "success". Again, it tells us nothing about the motions involved, or whether those motions were appropriate. If the motions were predominantly about regulating the number of goldfish allowed in a residence, who the heck cares? Being on the "winning" side of a vote is ultimately irrelevant - the question is whether that vote was important, and whether the vote cast actually represented the wishes of the member's constituents.

The first thing about this that I find interesting is that this appears to be a sign that the Manning Centre is going to start positioning itself in a manner similar to the Fraser Institute, which has spent much of the last twenty years spouting ideologically slanted "research" as if it is somehow unbiased and logical. The fact that this report's release coincides with the launch of the "Common Sense Calgary" campaign and the launch of Calgary's civic election suggests that there is an attempt to attack the current city council incumbents who don't align well with the Conservative cabal that wants to control all levels of politics.

The report is lengthy - some 38 pages, and filled with lots of statistical analysis of council meeting minutes. It is filled with lots of "pretty graphs", but graphs seldom tell the whole story - in fact, statistics themselves have proven to be a somewhat suspect tool in analyzing politics in general.

The writing of this report is intentionally oblique. There are regular allusions to "factions" on council, but the members and nature of these factions are never laid out. It is filled with graphs that imply things, but never really provide any informative value.

The obliqueness serves a purpose. It enables the Manning Centre to claim that it is "non-partisan" and "unbiased". However, like any ideological think-tank operation, the Manning Centre (which, for the purposes of my writing includes the "Manning Foundation") has a known bias, and a desire to forward a particular worldview. The challenge then is to understand how the report forwards the Manning Centre's agenda without being explicit about it.

From the front page, Key Findings section, we find the following:

> In systems with political parties, the public choice is simplified by candidates signing up for the values of a political party. In Calgary, and likely other cities, there is strong opposition to slates and parties entering the political sphere. An initiative such as this may offer the best of both worlds by helping to understand how independent candidates vote on the issues.

Consider the wording here: "In systems with political parties, the public choice is simplified by candidates signing up for the values of a political party". It is no secret that there have been regular attempts by various groups to run "slates" of candidates in Calgary. The most recent attempts have been primarily made by various conservative activists. By far, the efforts of the Manning Centre and the developers this year are the best funded. Further, it is no secret in Calgary that the notion of partisan alignment is a powerful thing in voters' minds. It has long been joked that you could run a bale of hay under the Conservative banner and get it elected. Yet, when you take away the partisan labels, Calgarians end up voting remarkably centrist.

It would not surprise me at all if the Manning Centre, among others, would love to see the injection of party politics into civic level politics. This already happens in the United States, and the emergence of slates and the like no doubt are the beginning stages of that kind of polarization.

The last statement of the paragraph is also interesting "An initiative such as this may offer the best of both worlds by helping to understand how independent candidates vote on the issues". The wording is again somewhat ambiguous. Is the "initiative" being referred to the report itself, or is it the creation of slates and party-like structures? It could be either, but I will be charitable and assume that it is the report author referring to the report itself.

> 1. 19 per cent of Calgary council business is conducted in camera, and most council meetings (78%) proceeded with at least some portion being behind closed doors. The topics most commonly discussed in camera are issues relating to personnel, intergovernmental relations, and procurement, sales, and leases.
> 2. Councilors’ attitudes toward taxing and spending vary strongly, with some routinely voting for less spending and others voting for more expenditures.
> 3. There are two clear voting blocs on the Council that regularly vote together, one of which is much more successful than the other in getting motions passed. There is also a third group who vote more randomly in respect of other councillors.
> 4. Ten Council members get their way about 70% or more of the time while the remaining five get their preferred outcome much less often. Councilors’ odds in passing motions vary strongly, with some consistently winning and others usually voting against successful motions.
> 5. Five councilors abstained themselves from Council business due to a pecuniary interest, with Councilor Mar accounting for most declarations of conflict.
> 6. Most councilors will vote in favour of most of motions brought forward, while two in particular will usually vote against.

This list of key findings is an interesting mix of content. On one hand, it tries to play the scare card by leading off with the amount of council business that is done "behind closed doors". Frankly, having been a manager with significant responsibilities in the past, it is no surprise to me that the topics involved are done behind closed doors. Doing otherwise could negatively impact non-council stakeholders in the discussions such as companies bidding to do work.

If less than 20% of council business falls into that category, I'd say that's pretty good, given that at the Federal level Harper has driven almost all committee business behind closed doors and has reduced debates over legislation to almost meaningless pro forma acts. Far more happens in Ottawa when Parliament isn't sitting these days (under the guise of "order in council").

I find the second conclusion particularly ridiculous. The first point is that none of the data analysis presented further on in the report substantiates the claim. There are lots of graphs later on in the report, but none of the data breaks out voting on spending-related subjects from other council business.

Items 3, 4 and 5 allude to the presence of "blocs" or "factions" on council. Some of the subsequent analysis attempts to demonstrate these voting blocs, but does so in a manner that is intended to make it very difficult to actually identify who is aligning with whom.

Delving into the data analysis, things get a little more complicated, and the use of weighting and words to influence the way that the reader would interpret the results becomes important. Consider the following graph regarding spending motions:

The title alone makes the implicit claim that spending is a bad thing, that it is somehow "unfriendly to taxpayers" if you vote for spending. This is nonsense. Governments need to spend money to build, maintain and repair infrastructure. Was it "unfriendly" to taxpayers to spend the millions of dollars needed to get Calgary back on its feet this past June? Of course not - it was essential that it happen.

Further, the choice of wording and scaling contains an implicit bias - if 100% is "always votes to reduce taxes and spending", that plays on the bias that is instilled in all of us during our school years - 100% is the perfect "A" score, something to strive for.

The Manning Centre makes the bald presupposition that spending is evil, and then proceeds to make an overly simplistic analysis of the data. We have no way of telling here how the various aldermen voted on particular issues, nor does the report lay out the criteria for selecting what votes to include or exclude in this analysis.

If, for example, Demong voted according to the wishes of his constituency consistently, what does it matter if he was on the "winning side" only 45.2% of the time?

You would almost hope that this would reveal the voting blocs on council. It does, sort of. But at the same time, there is enough extra noise in the picture to make it exceptionally difficult to sort out who the key factions are. In fact, there is enough "yellow" in the graph to make it exceptionally vague. There is more signal lost to noise than there is signal here. That isn't overly surprising, though. At such a macro level as "all votes", it tells us precious little of real value. Coalitions of the nature that we would expect to form in a non-partisan council environment will shift and change based on the subject at hand.

A more fine-grained analysis which measures votes against different classes of motion would be far more informative.
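As a rough illustration of what I mean, here is a minimal sketch of a "who votes with whom" calculation broken out by class of motion. Everything in it is hypothetical: the members `A`, `B` and `C`, the motion classes, and the votes are made up for the example, and the function name `agreement_by_class` is my own. None of it comes from the report or its data.

```python
from collections import defaultdict
from itertools import combinations

# Hypothetical vote records: (motion class, {council member: vote}).
# Names and votes are invented for illustration; they are not from the report.
VOTES = [
    ("spending", {"A": "yes", "B": "yes", "C": "no"}),
    ("spending", {"A": "yes", "B": "no", "C": "no"}),
    ("land use", {"A": "no", "B": "no", "C": "yes"}),
    ("land use", {"A": "yes", "B": "yes", "C": "yes"}),
]


def agreement_by_class(votes):
    """Return {motion class: {(member1, member2): share of motions voted the same way}}."""
    same = defaultdict(lambda: defaultdict(int))
    total = defaultdict(lambda: defaultdict(int))
    for motion_class, ballot in votes:
        # Compare every pair of members who voted on this motion
        for pair in combinations(sorted(ballot), 2):
            total[motion_class][pair] += 1
            if ballot[pair[0]] == ballot[pair[1]]:
                same[motion_class][pair] += 1
    return {
        cls: {pair: same[cls][pair] / n for pair, n in pairs.items()}
        for cls, pairs in total.items()
    }


rates = agreement_by_class(VOTES)
for cls, pairs in rates.items():
    for (m1, m2), rate in pairs.items():
        print(f"{cls}: {m1} and {m2} agree {rate:.0%} of the time")
```

In this toy data, A and B vote together on three of four motions overall, which looks like a bloc. Split by class, they agree on every land use motion but only half of the spending motions, which is exactly the kind of shifting coalition an "all votes" graph hides.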

Now the not so subtle bias comes to the surface. Note that the individual "who votes with whom" graphs are subtly weighted for visual impact. The "who votes with Nenshi" graph is displayed considerably larger than those for the individual aldermen.

We already know that the Manning Centre and their allies are out to disable Nenshi as much as possible. For all intents and purposes, this becomes a hit-list of who to go after during an election. Perhaps of some interest here is the fact that Pincott and Farrell, two of Calgary's Aldermen who are being targeted by the developers, don't actually vote with Nenshi all that often overall. Again, it would be far more interesting to see this kind of information broken out along the lines of the type of motion involved.

The next couple of sections are more of the "meaningless aggregate statistics" which really tell you very little about the performance of any one individual on council, nor of the greater body of city council.

The last section I want to comment on is the "language complexity" analysis in the "Question Period" section of the report.

The Gunning Fog Index and the Flesch-Kincaid Grade Level are numerical techniques for analyzing the complexity of someone's writing (or speech). In my view, they are of exceptionally limited value in an analysis like this for several reasons:

1) Both tools ignore context. They tend to assume that the audience is somehow "the average joe" (I've never met this person myself - people are far too diverse). A given paragraph of text may well have a high complexity, but if there is appropriate context around it, the actual clarity may be very high.

2) Domain expertise. This is where these tools drive me nuts. In most domains, there is specific language that emerges, with very specialized meanings for each word. City Council no doubt has its own language of expertise as well. The algorithms that implement either of these indexes will inevitably complain quite loudly when a text contains domain jargon. Why? For the simple reason that the terminology brings a specific precision with it that only means something in that domain, while the indexes only see long words.

3) Your target audience isn't always a Calgary Sun newspaper reader (IIRC, the Sun targets a grade 5 reading level). Especially not in council sessions. The target audience there is going to be the other members of council first and foremost.

4) High complexity language isn't always bad, but that's what these tools end up reinforcing. I remember using an early Flesch-Kincaid implementation on a paper I wrote back in University. The paper was quite technical, and intended for an appropriately capable audience. The program complained bitterly about every line of the essay, fundamentally because it required a university level of reading ability to comprehend. Too bad for the program.
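To make the point about jargon concrete, here is a minimal sketch of both indexes using their standard published formulas: Flesch-Kincaid grade = 0.39 × (words per sentence) + 11.8 × (syllables per word) − 15.59, and Gunning Fog = 0.4 × ((words per sentence) + 100 × (complex words / words)), where a "complex" word has three or more syllables. The syllable counter is a crude vowel-run heuristic of my own, the usual Fog exclusions (proper nouns, common suffixes) are ignored, and both sample sentences are invented. I have no idea what implementation the report's authors used, so treat this as an assumption-laden illustration only.

```python
import re


def count_syllables(word):
    """Crude heuristic: count runs of vowels, with a minimum of one."""
    return max(1, len(re.findall(r"[aeiouy]+", word.lower())))


def readability(text):
    """Return (Flesch-Kincaid grade level, Gunning Fog index) for text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    syllables = sum(count_syllables(w) for w in words)
    # Fog counts "complex" words: three or more syllables (exclusions ignored here)
    complex_words = sum(1 for w in words if count_syllables(w) >= 3)
    words_per_sentence = len(words) / len(sentences)
    fk_grade = 0.39 * words_per_sentence + 11.8 * syllables / len(words) - 15.59
    fog = 0.4 * (words_per_sentence + 100 * complex_words / len(words))
    return fk_grade, fog


# Both sentences are made up for this example
plain = "We will fix the road. It will cost a lot."
jargon = "Administration recommends redesignating the parcel pursuant to the bylaw."

print("plain: ", readability(plain))
print("jargon:", readability(jargon))
```

Notice that neither formula has any input for who the audience is or what the words mean. The second sentence scores far "harder" simply because its words are longer, even though anyone who sits on council would read it without blinking.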

Once again, we find a subtle bit of bias creeping in here. By using a set of overly simplistic measures, and failing to wrap meaningful context around them, the Manning Centre's report basically suggests that some of Calgary's City Council talk at "too high a level".

I am of the opinion that this is fundamentally a pointless exercise. I want our elected officials using every ounce of their capability when managing this city. If that means that some of them use complex language, so be it. I remember being criticized once for writing e-mails that required one of the less literate managers to use a dictionary to figure out what I was saying - my response was much like what I'm writing here - 'too bad, deal with it'.

What I find interesting about this report is that its authors seem to think that they have shown something interesting. What they have really demonstrated is just how pointless statistical analysis of data can be if you blindly start ripping out the context.

When assessing the performance of your elected representatives, a bunch of statistical parlour tricks matters far less than more to-the-point questions. Does the person respond to their constituents' issues as they are raised? How quickly? Do they try and bury the input of people they disagree with? Do their votes on council motions reflect the input from their constituencies? Do they proactively solicit that input?

I could go on. At the end of the day, it still boils down to yet another hack report from a think-tank with an agenda. It should be valued accordingly.
